Multi-Stage Thermal-Triggered VLM Framework for On-Device Eating Detection and Caloric Estimation

In submission to the International Symposium on Wearable Computers (ISWC), 2026

Summary: We develop a resource-efficient, multi-stage sensing and inference pipeline that enables real-time eating detection and caloric estimation on edge devices under strict compute and power constraints.

Abstract

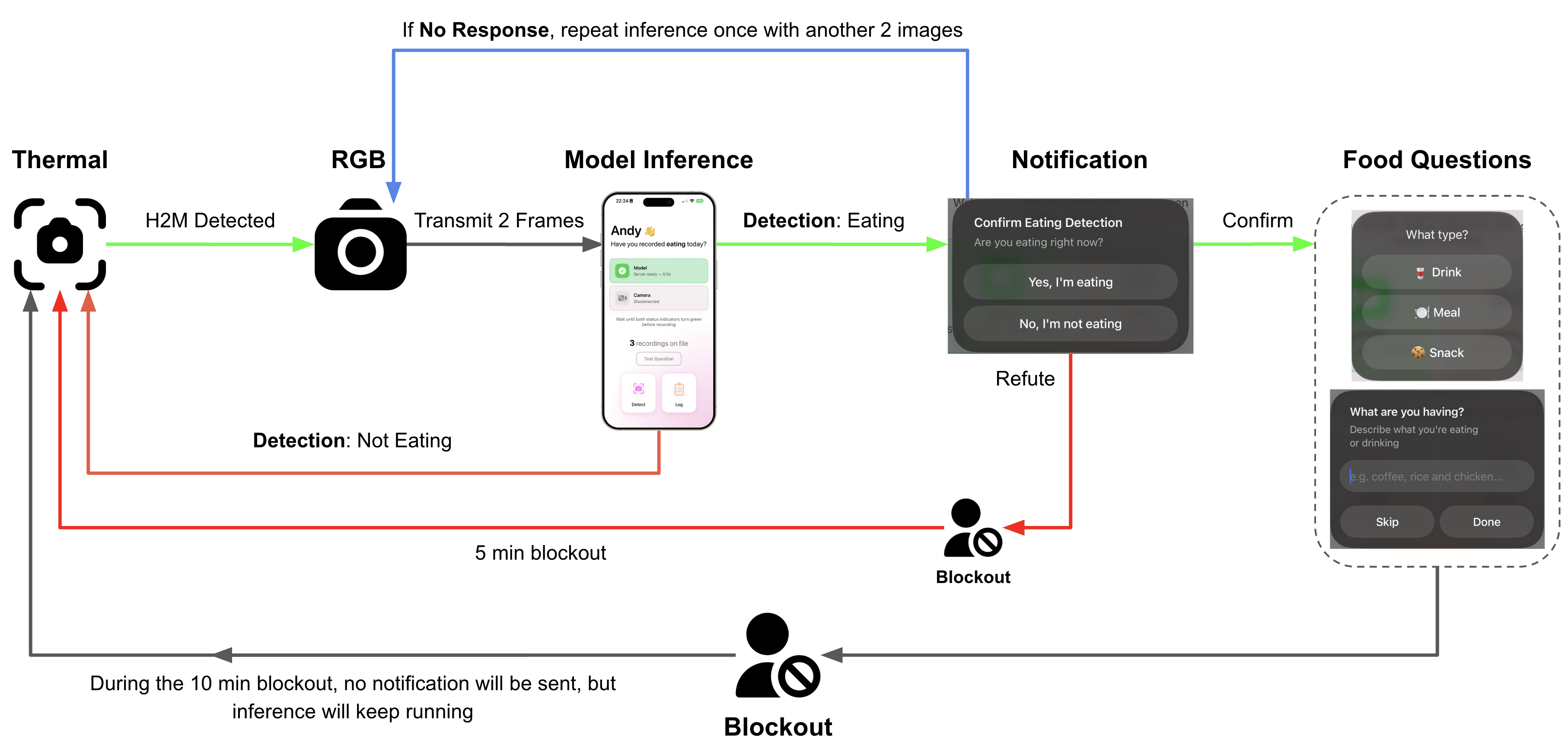

We present a multi-stage, resource-aware multimodal sensing framework for real-time eating detection and caloric estimation on embedded devices. The system introduces a thermal-triggered gating mechanism that activates the high-cost sensors (RGB and depth) only when a candidate intake event is detected, which significantly reduces energy consumption and avoids unnecessary computation.

The gating module leverages temperature thresholding, connected-component analysis, and spatial centroid constraints to robustly identify hand-to-mouth events from low-resolution thermal data. Upon activation, a lightweight, NAS-optimized vision-language model processes RGB/depth inputs for fine-grained eating detection, while depth-based volumetric reconstruction estimates food portion size for caloric inference.
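The three gating steps named above (temperature thresholding, connected-component analysis, and a spatial centroid constraint) can be illustrated with a minimal sketch. It is not the authors' implementation: the function names, the 30 °C threshold, the minimum blob area, and the `mouth_roi` rectangle are all hypothetical values chosen for illustration. The only hardware fact assumed is that the MLX90640 produces a 32 x 24 pixel frame.

```python
from collections import deque

ROWS, COLS = 24, 32  # MLX90640 frame size: 32 columns x 24 rows


def hot_components(frame, temp_min_c):
    """Return 4-connected blobs of pixels at or above temp_min_c.

    frame is a list of rows of temperatures in degrees Celsius.
    Each blob is a list of (row, col) tuples.
    """
    rows, cols = len(frame), len(frame[0])
    seen = [[False] * cols for _ in range(rows)]
    blobs = []
    for r in range(rows):
        for c in range(cols):
            if seen[r][c] or frame[r][c] < temp_min_c:
                continue
            # Breadth-first flood fill from this unvisited hot pixel.
            queue, blob = deque([(r, c)]), []
            seen[r][c] = True
            while queue:
                y, x = queue.popleft()
                blob.append((y, x))
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and not seen[ny][nx]
                            and frame[ny][nx] >= temp_min_c):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            blobs.append(blob)
    return blobs


def centroid(blob):
    """Mean (row, col) position of a blob."""
    n = len(blob)
    return (sum(y for y, _ in blob) / n, sum(x for _, x in blob) / n)


def should_wake_sensors(frame, temp_min_c=30.0, min_area=6,
                        mouth_roi=(0, 8, 10, 22)):
    """Decide whether to power up the RGB/depth sensors.

    All defaults are hypothetical. mouth_roi is an inclusive
    (row_min, row_max, col_min, col_max) rectangle in which a warm
    blob's centroid is treated as a candidate hand-to-mouth event.
    """
    r0, r1, c0, c1 = mouth_roi
    for blob in hot_components(frame, temp_min_c):
        if len(blob) < min_area:
            continue  # too small: likely sensor noise, not a hand
        cy, cx = centroid(blob)
        if r0 <= cy <= r1 and c0 <= cx <= c1:
            return True
    return False


# Usage: a room-temperature frame with one warm 3x3 patch near the top centre.
frame = [[22.0] * COLS for _ in range(ROWS)]
for r in range(2, 5):
    for c in range(14, 17):
        frame[r][c] = 33.0
wake = should_wake_sensors(frame)
```

The area filter and the centroid check are what keep the gate cheap: both run on at most 768 pixels per frame, so the decision to wake the expensive sensors needs no learned model.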

We integrate thermal (MLX90640) and RGB/depth streams into a unified pipeline and deploy the system on resource-constrained edge hardware (e.g., ESP32-class devices), enabling real-time inference with adaptive sensing. Experimental results demonstrate that the proposed framework achieves accurate intake detection and caloric estimation while significantly improving efficiency through selective sensor activation.
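Once the gate has woken the RGB/depth sensors, the depth-based portion estimate can be sketched as a height-field integration followed by a density lookup. This is a simplified illustration under strong assumptions that the abstract does not state: the depth camera looks straight down at a flat surface of known depth, every pixel covers the same area on that surface, and the food's density and energy density come from an external table. All names and numeric values below are hypothetical.

```python
def food_volume_ml(depth_mm, table_depth_mm, pixel_area_mm2, mask=None):
    """Approximate food volume by summing height above the table plane.

    depth_mm        rows of per-pixel depth readings in millimetres
    table_depth_mm  depth of the empty table surface (assumed flat)
    pixel_area_mm2  area one pixel covers on the table (assumed constant)
    mask            optional rows of booleans marking food pixels
    """
    volume_mm3 = 0.0
    for r, row in enumerate(depth_mm):
        for c, d in enumerate(row):
            if mask is not None and not mask[r][c]:
                continue
            height = table_depth_mm - d  # food is closer than the table
            if height > 0:
                volume_mm3 += height * pixel_area_mm2
    return volume_mm3 / 1000.0  # 1 mL = 1000 mm^3


def calories_kcal(volume_ml, density_g_per_ml, kcal_per_g):
    """Convert volume to energy: volume -> mass -> kilocalories."""
    return volume_ml * density_g_per_ml * kcal_per_g


# Usage: a 5x5 pixel block standing 20 mm above a table 500 mm from the camera.
depth = [[500.0] * 10 for _ in range(10)]
for r in range(3, 8):
    for c in range(3, 8):
        depth[r][c] = 480.0

volume = food_volume_ml(depth, table_depth_mm=500.0, pixel_area_mm2=4.0)
energy = calories_kcal(volume, density_g_per_ml=1.0, kcal_per_g=1.5)
```

In practice the per-pixel footprint grows with distance from the camera, so a real system would derive it from the camera intrinsics rather than use a constant.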

These findings highlight the potential of combining event-driven sensing, multimodal fusion, and model optimization to enable scalable, privacy-aware, and energy-efficient health monitoring in real-world environments.