Research

Research Statement

I design resource-efficient multimodal machine learning systems for real-world health sensing. My research focuses on enabling accurate, robust, and interpretable inference under strict on-device constraints (limited compute, power, and sensing bandwidth).

My work lies at the intersection of machine learning, embedded systems, and mobile health (mHealth), with three primary directions:

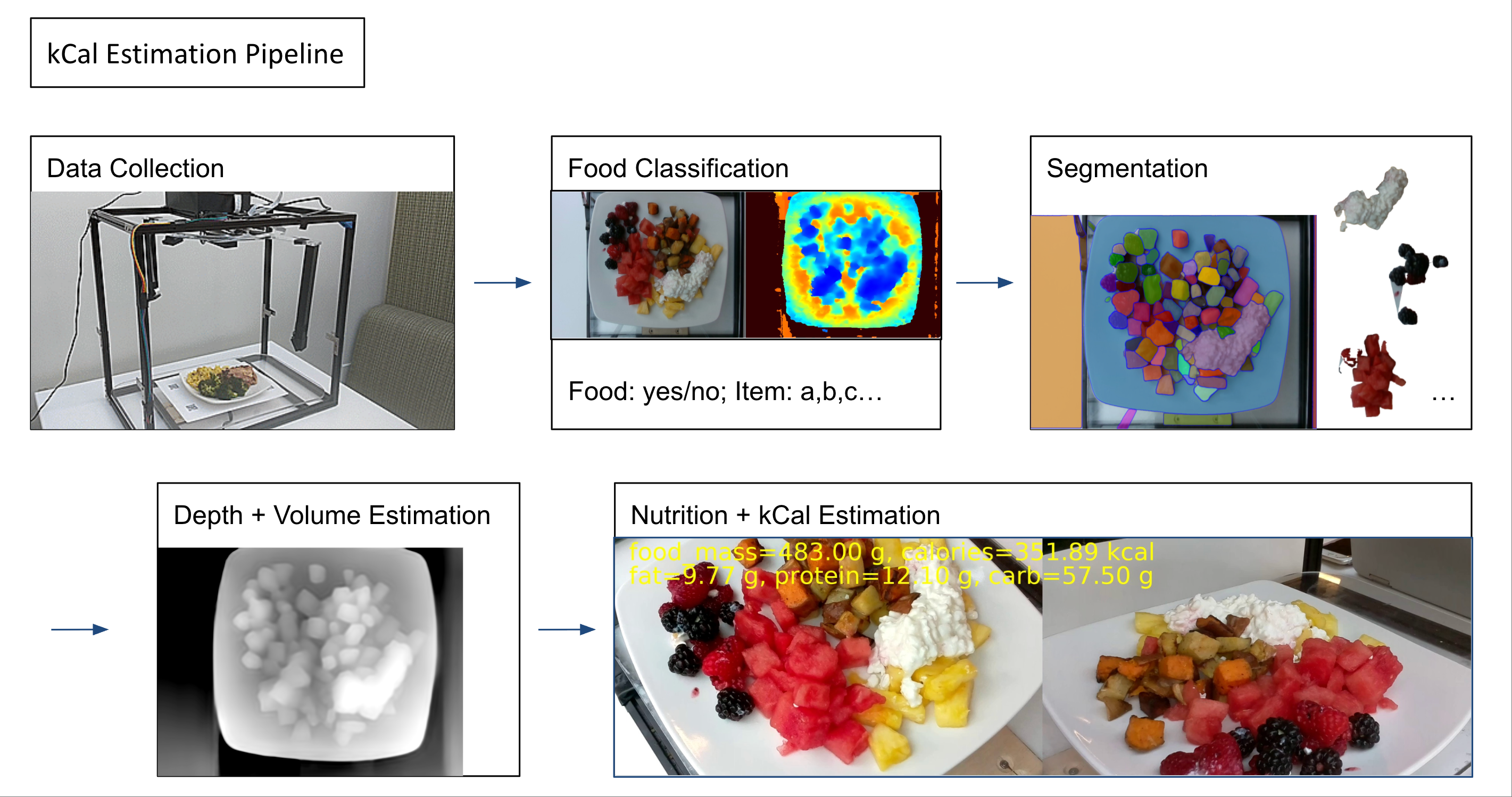

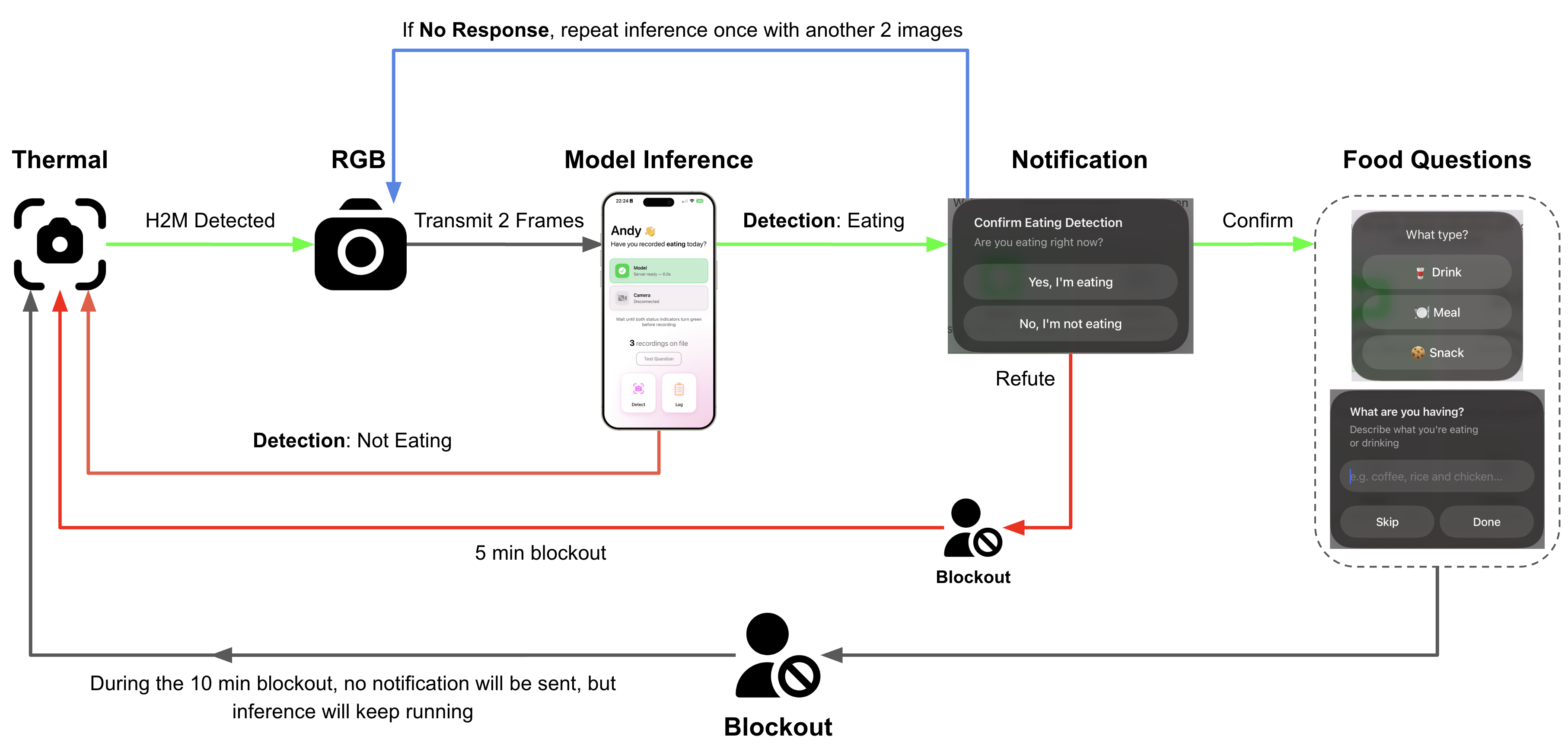

- On-device multimodal perception: developing adaptive sensing pipelines that integrate heterogeneous data streams (e.g., thermal, RGB/depth, IMU, physiological signals) while minimizing unnecessary computation.

- Efficient model design and deployment: leveraging techniques such as neural architecture search (NAS), lightweight deep models, and conditional computation to enable real-time inference on resource-constrained devices.

- Robust real-world modeling: building systems that generalize across users, environments, and sensing conditions, with an emphasis on interpretability and reliability in longitudinal deployments.

I have applied these principles to eating behavior understanding, energy expenditure modeling, indoor localization, and stress monitoring, with a consistent focus on end-to-end system design, from sensing through inference to deployment.

Ongoing Projects